Ok, to enjoy this blog post I’m pretty confident that you have to be a bit of a JavaScript, Python and map geek. At the very least, you'll have to watch this presentation from FOSS4G 2014 by Eric Theise.

Ok, you watched it? You got his point? My takeaway was that the further down the zoom stack you go, the more map tiles you have to generate, but in many cases you only want to show a map that puts your town/business/whatever on the map. You would still like some context, though, so you have to set the bbox in TileMill WIDE. Then the tileset gets large, and you don’t bother.
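To put "a ton of tiles" into numbers: zoom level z of a world-wide Web Mercator tileset has 2^z × 2^z = 4^z tiles, so every extra zoom level quadruples the work. The helper below (its name is just illustrative) adds this up.

```python
def pyramid_tile_count(minzoom, maxzoom):
    """Count the tiles in a full world pyramid; zoom level z holds 4**z tiles."""
    return sum(4 ** z for z in range(minzoom, maxzoom + 1))


print(pyramid_tile_count(18, 18))  # 68719476736 tiles at zoom 18 alone
print(pyramid_tile_count(0, 18))   # 91625968981 tiles for zoom 0 through 18
```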

So, I thought that there should be a way to limit a web map library to only request tiles within a given bounding box. It turns out that Leaflet has this option: set the “bounds” parameter on an L.TileLayer and you are good to go?

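```javascript
// bounds is an L.LatLngBounds covering the area you want to show
L.tileLayer('http://{s}.tile.osm.org/{z}/{x}/{y}.png', {
    attribution: '© OpenStreetMap contributors',
    bounds: bounds // only request tiles that intersect these bounds
}).addTo(map);
```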

Well, the user can still pan away from the area, so set “maxBounds” on the map as well:

```javascript
var map = L.map('map', {maxBounds: bounds}).setView([63.4, 10.3], 0);
```

This works, and the number of requests sent to the tile server is reduced, but it doesn’t look good, does it?

The main problem is that L.TileLayer only loads tiles that intersect the bounding box you’ve set (the small blue square on my map). So we have to do something about that, and after a bit of digging I found that the “_tileShouldBeLoaded” method of L.TileLayer performs this check (at least in version 0.7.3). Thankfully, Leaflet classes are easy to subclass, and so “L.BoundedTileLayer” was born.

To get more context, I wanted to show some neighboring tiles as well as those that intersect the bounds. So, after checking for intersection, we perform one additional check, which I think is best described in code:

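```javascript
// Excerpt from L.BoundedTileLayer's _tileShouldBeLoaded, run after the
// intersection test. nw and se are the tile's north-west and south-east
// corners (L.LatLng), and tileCenter is the tile's center.

// the distance from the tile center lng to the closest bounds edge
var lngDist = Math.min(
    Math.abs(tileCenter.lng - options.bounds.getEast()),
    Math.abs(tileCenter.lng - options.bounds.getWest())
);

// the distance from the tile center lat to the closest bounds edge
var latDist = Math.min(
    Math.abs(tileCenter.lat - options.bounds.getSouth()),
    Math.abs(tileCenter.lat - options.bounds.getNorth())
);

// tile size in degrees; in Web Mercator the height in degrees shrinks
// away from the equator, so these are generally not equal
var tileWidth = Math.abs(nw.lng - se.lng);
var tileHeight = Math.abs(nw.lat - se.lat);

// load the tile if it is within one tile of the bounds in both directions
return lngDist < tileWidth && latDist < tileHeight;
```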
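Written as a formula, the additional test above loads a tile whose center lies at longitude $\lambda_c$ and latitude $\varphi_c$ when

$$
\min\left(|\lambda_c - \lambda_E|,\ |\lambda_c - \lambda_W|\right) < w_t
\quad\text{and}\quad
\min\left(|\varphi_c - \varphi_S|,\ |\varphi_c - \varphi_N|\right) < h_t,
$$

where $\lambda_W, \lambda_E, \varphi_S, \varphi_N$ are the west, east, south and north edges of the bounds, and $w_t = |\lambda_{nw} - \lambda_{se}|$ and $h_t = |\varphi_{nw} - \varphi_{se}|$ are the tile's width and height in degrees. Both inequalities have to hold.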

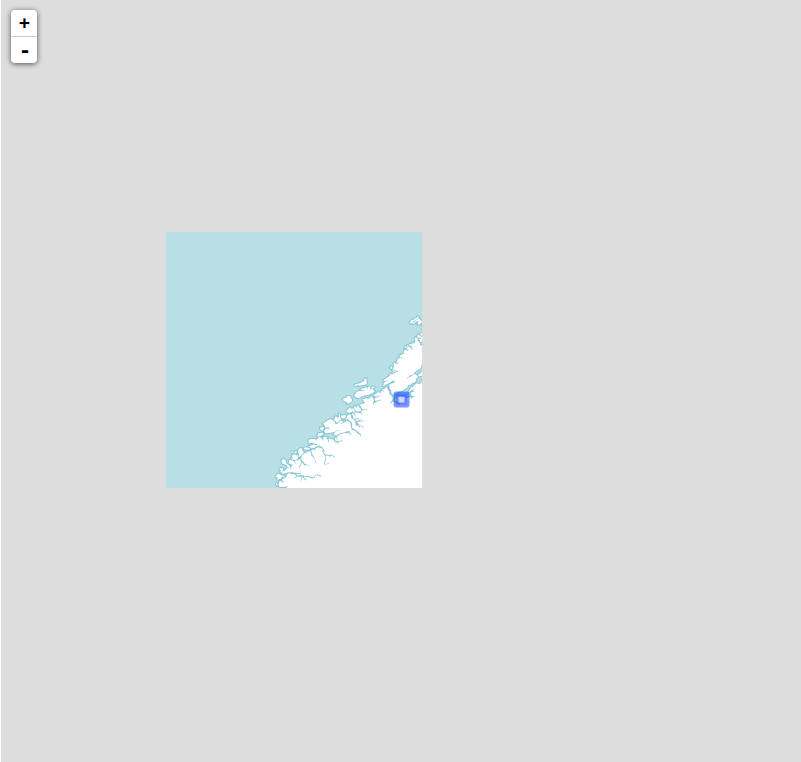

In essence, I check whether the distance from the center of a given tile to the closest east/west edge of the bounds is smaller than the tile width, and whether the distance to the closest north/south edge is smaller than the tile height. This can probably be optimized a bit, and I can almost guarantee that there are corner cases I've missed, but it seems to work as expected:

Now we get more context, and we still limit the number of tiles requested. Happy?

No: we still have to generate all those tiles, because we don't know in advance which tiles the client will request. So, ditching JavaScript and Leaflet for a bit, I switched to Python and tried to find a way to generate a list of all the tiles the client would request using L.BoundedTileLayer for a given bounding box.

Luckily, some smart people have already tackled the maths involved in computing tile indexes from bounding boxes, and the open source script I found at http://www.maptiler.org/google-maps-coordinates-tile-bounds-projection/ was a good starting point. After that it was just a matter of porting the logic from L.BoundedTileLayer to Python, freeing myself from the Leaflet context, and writing some 30 lines of code (3-4 hours of trial and error; I don't know how many times I mixed up the axis ordering!).
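The Python side can be sketched roughly as follows. This is a minimal sketch, not the script from this post: it uses the standard Web Mercator ("slippy map") formulas rather than the maptiler script, assumes XYZ tile numbering (row 0 at the top, which is what Leaflet requests), takes the tile center as the midpoint of its corners in degrees, and uses hypothetical function names. The `pad` parameter encodes my assumption that qualifying neighbors never lie more than two tiles outside the range of intersecting tiles.

```python
import json
import math

MAX_LAT = 85.0511287798  # Web Mercator latitude limit


def lnglat_to_tile(lng, lat, z):
    """Return the XYZ tile (x, y) that contains (lng, lat) at zoom z."""
    n = 2 ** z
    lat = max(min(lat, MAX_LAT), -MAX_LAT)
    x = int((lng + 180.0) / 360.0 * n)
    y = int((1.0 - math.asinh(math.tan(math.radians(lat))) / math.pi) / 2.0 * n)
    # points exactly on the east or south edge of the world fall outside the grid
    return min(max(x, 0), n - 1), min(max(y, 0), n - 1)


def tile_nw_corner(x, y, z):
    """Return (lng, lat) of the north-west corner of XYZ tile (x, y) at zoom z."""
    n = 2 ** z
    lng = x / n * 360.0 - 180.0
    lat = math.degrees(math.atan(math.sinh(math.pi * (1.0 - 2.0 * y / n))))
    return lng, lat


def should_load(x, y, z, west, south, east, north):
    """Port of the bounded check: the tile intersects, or is a close neighbor."""
    w_lng, n_lat = tile_nw_corner(x, y, z)
    e_lng, s_lat = tile_nw_corner(x + 1, y + 1, z)  # south-east corner
    # 1) plain intersection test (touching edges count, as in Leaflet)
    if w_lng <= east and e_lng >= west and s_lat <= north and n_lat >= south:
        return True
    # 2) neighbor test: distance from the tile center to the closest edges
    c_lng, c_lat = (w_lng + e_lng) / 2.0, (n_lat + s_lat) / 2.0
    lng_dist = min(abs(c_lng - east), abs(c_lng - west))
    lat_dist = min(abs(c_lat - south), abs(c_lat - north))
    return lng_dist < (e_lng - w_lng) and lat_dist < (n_lat - s_lat)


def tiles_for_bbox(west, south, east, north, minzoom, maxzoom, pad=2):
    """List the tiles a bounded layer would request, as {"z", "y", "x"} dicts."""
    tiles = []
    for z in range(minzoom, maxzoom + 1):
        n = 2 ** z
        x0, y0 = lnglat_to_tile(west, north, z)  # top-left intersecting tile
        x1, y1 = lnglat_to_tile(east, south, z)  # bottom-right intersecting tile
        # ASSUMPTION: neighbors lie at most `pad` tiles outside that range
        for x in range(max(x0 - pad, 0), min(x1 + pad, n - 1) + 1):
            for y in range(max(y0 - pad, 0), min(y1 + pad, n - 1) + 1):
                if should_load(x, y, z, west, south, east, north):
                    tiles.append({"z": z, "y": y, "x": x})
    return tiles


if __name__ == "__main__":
    # a rough, illustrative box around Trondheim: west, south, east, north
    print(json.dumps(tiles_for_bbox(10.0, 63.3, 10.6, 63.5, 0, 2)))
```

The output is a list of dicts in the same `{"z", "y", "x"}` shape as the list in this post.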

In the end I could throw a bounding box and a minimum and maximum zoom level at my script and get back a list of the form:

```
[
    {"z": 0, "y": 0, "x": 0},
    {"z": 1, "y": 0, "x": 0},
    {"z": 1, "y": 1, "x": 0},
    ...
]
```

But this doesn't help me much: I want to generate these specific tiles using TileMill! So I started researching how to do that. Nothing came up, except this page describing how to export tiles from TileMill via the command line. I studied the (lack of?) documentation and then started digging into the source of TileMill and TileLive (which TileMill uses to render tiles with Mapnik), but after 3 hours I was getting nowhere and still had nothing working. The next day I asked on Stack Exchange, and a couple of hours later it dawned on me (to quote my own answer) "that the option --list=[file] could be what I was after."

After digging a bit through the TileMill and TileLive source code, I found that this option is (not surprisingly) used by the filescheme renderer (the comments say so). After some further digging in the source code to figure out the format of the file, I was able to tell TileMill to render a specific set of tiles.
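Turning the JSON tile list into a file for `--list` is a small step. The sketch below assumes that the file scheme reads one tile per line as `z/x/y` in XYZ order; that line format is my assumption, not something confirmed in this post, so check it against the filescheme source of your TileLive version. The function names are hypothetical.

```python
import json


def format_tile_list(tiles):
    """Render tiles (dicts with z, x, y keys) as one 'z/x/y' line per tile.

    ASSUMPTION: 'z/x/y' per line is the format the file scheme expects;
    verify it against your TileLive version before relying on it.
    """
    return "".join("{z}/{x}/{y}\n".format(**t) for t in tiles)


def write_tile_list(json_path, list_path):
    """Read a JSON tile list and write it out as a --list file."""
    with open(json_path) as src:
        tiles = json.load(src)
    with open(list_path, "w") as dst:
        dst.write(format_tile_list(tiles))


# prints 0/0/0 and 1/1/0 on separate lines
print(format_tile_list([{"z": 0, "y": 0, "x": 0}, {"z": 1, "y": 0, "x": 1}]), end="")
```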

Success!

Well, a bit of streamlining was called for, so I cleaned things up, created a config file for input and looked into calling the TileMill export function from my Python code. This all worked fine, and pretty soon I could type "python tile.py -c config.json" and 3 minutes later have an .mbtiles file of about 14 MB that covers the Trondheim municipality, zoomable from zoom level 0 through 18. Neat!
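A config-driven entry point along these lines could look roughly like the sketch below. The post doesn't show its config file, so the keys (`bbox`, `minzoom`, `maxzoom`) and the helper names are hypothetical; the sketch only parses `-c` and validates the config, and leaves out the TileMill export call.

```python
import argparse
import json

# Hypothetical key names: the real config.json is not shown in the post.
REQUIRED_KEYS = ("bbox", "minzoom", "maxzoom")


def load_config(text):
    """Parse and sanity-check a config such as
    {"bbox": [west, south, east, north], "minzoom": 0, "maxzoom": 18}."""
    config = json.loads(text)
    missing = [key for key in REQUIRED_KEYS if key not in config]
    if missing:
        raise ValueError("config is missing keys: " + ", ".join(missing))
    west, south, east, north = config["bbox"]
    if not (west < east and south < north):
        raise ValueError("bbox must be [west, south, east, north]")
    if not 0 <= config["minzoom"] <= config["maxzoom"]:
        raise ValueError("need 0 <= minzoom <= maxzoom")
    return config


def parse_args(argv=None):
    """Parse the command line, e.g. `python tile.py -c config.json`."""
    parser = argparse.ArgumentParser(description="Render a bounded tileset")
    parser.add_argument("-c", "--config", required=True,
                        help="path to the JSON config file")
    return parser.parse_args(argv)


def main(argv=None):
    args = parse_args(argv)
    with open(args.config) as f:
        config = load_config(f.read())
    # The real script would now compute the tile list and call TileMill's
    # export; that part is not sketched here.
    return config
```

To run it as a script, add the usual `if __name__ == "__main__": main()` guard.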

Then came the moment of truth: would the tileset I generated line up with what my Leaflet layer requested? I installed TileStache, and 30 seconds later I had a tile server running on localhost, serving my generated .mbtiles file to my Leaflet map. And it worked!
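TileStache plus Leaflet is a visual check. A complementary check, sketched below as my own addition rather than part of the original workflow, compares the tile index inside the .mbtiles file with the expected list using Python's built-in sqlite3 module. Per the MBTiles specification, tiles are listed in a `tiles` table (or view) with `zoom_level`, `tile_column` and `tile_row` columns, and `tile_row` uses the TMS scheme with row 0 at the bottom, so it has to be flipped before comparing it with XYZ indexes like the ones Leaflet requests. That flip is also an easy place to mix up axes. The function names are hypothetical.

```python
import sqlite3


def flip_y(z, y):
    """Convert a row index between XYZ (row 0 at the top) and TMS (row 0 at
    the bottom); the flip is its own inverse."""
    return (2 ** z - 1) - y


def stored_tiles(conn):
    """Return the (z, x, y) XYZ tiles stored in an open MBTiles database."""
    rows = conn.execute("SELECT zoom_level, tile_column, tile_row FROM tiles")
    # MBTiles stores tile_row in TMS order, so flip it back to XYZ
    return {(z, x, flip_y(z, row)) for z, x, row in rows}


def diff_tiles(conn, expected):
    """Compare an MBTiles database with a list of {"z", "y", "x"} dicts.

    Returns (missing, unexpected) as sets of (z, x, y) tuples.
    """
    have = stored_tiles(conn)
    want = {(t["z"], t["x"], t["y"]) for t in expected}
    return want - have, have - want
```

For example, `diff_tiles(sqlite3.connect("trondheim.mbtiles"), tiles)` (file name illustrative) should return two empty sets if the export matches the list.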

So, why should you care? Well, I for one think this is cool in itself, but I also see some useful applications for it. The components I've built are not far from the point where I can trigger the generation of a new tileset from a web map. If I do this, I can load up a PostGIS database with up-to-date, detailed map data (and refresh it through a cron job or something), define the cartography in CartoCSS, and generate a small tile cache for small projects on the fly, when needed.

The only drawback is that I have to learn CartoCSS properly to do this, but I guess that's the topic of another blog post.

Do you have any ideas how this can be used, improved or built upon? Feel free to let me know, either in the comments here or via a pull request to the GitHub repo!